What Data Can You Get from a SERP API? Complete analysis

A complete guide to SERP API data, covering Organic results, PAA, Local Pack, Shopping, and Ads modules. Learn what fields you can extract, how to evaluate SERP API performance, and how to design a practical PoC for search data collection.

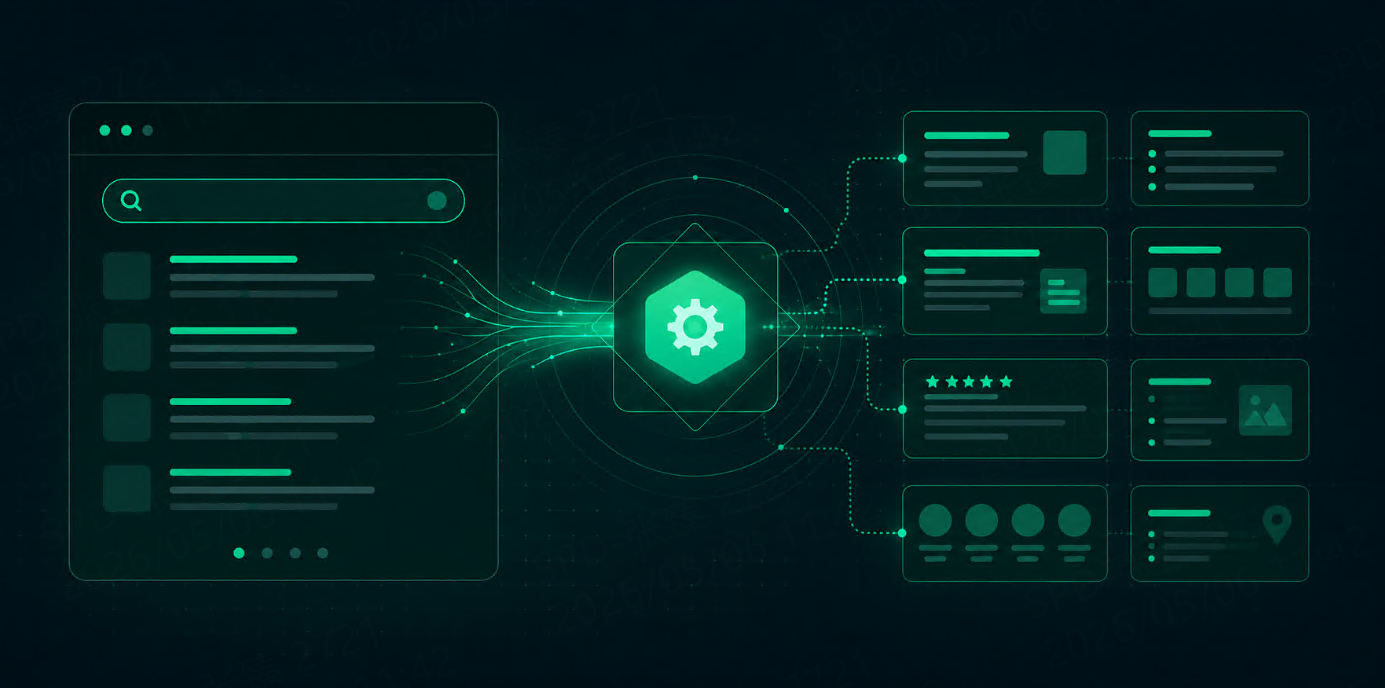

A SERP API is not just a “crawler/proxy with a new name”; it is more like a managed SERP result delivery service. You submit keywords with region/language/device parameters, and it handles proxy rotation, bans/CAPTCHA, and partial structure tracking, returning the search result page parsed into structured fields suitable for database insertion (often you can also get HTML, and in some cases, screenshots).

If you are doing keyword ranking monitoring, SERP feature scraping (PAA/Local Pack/Shopping/Ads), competitor pricing and ad slot monitoring, or collecting search corpora for RAG/training, and have recently encountered 403/CAPTCHA/unstable results:

Preferred SERP API scenario: you want “stable, usable SERP fields” without maintaining anti-bot or parsers long-term.

Better to self-build (residential proxies + own parsing) scenario: you need the “page itself” (full HTML, strong custom parsing, extend to non-SERP pages, second-hop/site-internal/logged-in/complex rendering).

Most common optimal solution: use SERP API for SERP layer to produce rankings and module fields; use residential proxies for deep scraping to fetch landing/detail pages and supplement business fields.

Below, we first explain “what SERP API is and is not,” then provide a module-wise field list and acceptance criteria ready for PoC.

What is a SERP API: you are buying “results,” not “exit points”

Evaluate SERP API as a “results service” to avoid half of the pitfalls.

Inputs you give it

Common inputs include:

query: keyword/search termGeography and language: e.g.,

gl/hl, and country/region/city targeting (implementation varies by service)Device type: desktop/mobile

Pagination and number: page number, results per page, etc.

These parameters are not decorative; they directly affect result appearance, especially Local Pack and Ads.

Outputs you receive (usually one or a combination):

Structured JSON: best for database insertion, monitoring, and alerting (main requirement for most teams)

Raw HTML: for backtracking, supplemental parsing, identifying “which party caused missing/misaligned fields”

SERP screenshot: for audit and dispute review (common in ad verification/brand protection)

What the service typically handles for you:

Proxy pool, rotation, and partial session management

Ban/CAPTCHA handling or bypass (degree must be empirically tested)

Structured parser maintenance (at least for common modules)

Some retry and failure recovery

What you still must handle yourself:

Field definitions: which fields count as “successful samples,” ranking calculation consistency

Quality monitoring: long-term monitoring of field completeness, misalignment, drift

Drift explanation: SERPs inherently have experiments, personalization, and geo drift—API cannot solve “results naturally change”

Compliance and usage boundaries: the service provides capability but does not assume your compliance responsibility

Supplementing missing fields: retain HTML for your own parsing, or introduce deep scraping layer

Three common misconceptions (corrected)

Misconception 1: Buying a SERP API means you can scrape any page

Fact: SERP API is strong for SERPs; for landing pages, detail pages, second-hop, internal pages, logged-in states, complex JS, you usually need a self-built scraping pipeline.

Misconception 2: Structured fields guarantee 100% reproducibility

Fact: Field names may be stable, but field values drift. You must measure “drift rate of repeated requests with same parameters” in PoC and set sampling and alerting strategies.

Misconception 3: Handing it to the service eliminates ToS/compliance risk

Fact: Risk only shifts or mitigates; it does not disappear. You must still assess usage, logs, and data retention.

What SERP API really saves you: “long-term anti-bot and parser maintenance tax”

For small/medium teams, the costliest part of SERP data is usually not a single request but months of maintenance: 403/429, CAPTCHA, structural changes, broken field extraction without immediate detection.

Three questions can help judge if SERP API value is sufficient:

Can the effective result success rate be maintained (not just HTTP 200, but module field usability)?

Are geo/device parameters truly usable (especially local pack targeting and Ads geo consistency)?

Can rich result modules be parsed properly (PAA/Local/Shopping/Ads are the modules most likely to trap self-built teams)?

If any of these are long-term unsatisfactory, either introduce HTML/screenshots for backstop or switch approach—do not expect “it will improve naturally after deployment.”

What search result data can SERP API provide: broken down by module/field family

For database insertion, alerting, and visualization, model by “module/field family” instead of copying vendor documentation by function. Below is a field list for direct PoC acceptance comparison.

Note: Different SERP APIs vary greatly in coverage, especially Shopping/Ads/AI modules; the following is “what you should request,” PoC results take precedence.

1) Organic Results: base for ranking monitoring

Minimal field set (enough to use)

Request dimensions: query, gl/hl, location (or equivalent), device, timestamp

Ranking dimension:

result_position(define clearly: natural position only?)Content dimension: title, snippet, link, displayed_link/website_name

Extensions: sitelinks (if available)

Common pitfalls: “position” may include Ads/Shopping; inconsistent calculation causes false fluctuations

2) PAA (People Also Ask): content/intent expansion and RAG candidate questions

Suggested fields:

questions[]: question list (minimum)

expanded_answer: summary after expansion (may require extra request)

source_url/source_title: reference source (critical for traceability)

Pitfalls: only questions returned, or expansion requires second request → affects cost and workflow

3) Local Pack: local SEO & store intelligence

Suggested fields:

name, rating, reviews_count

address, phone

website

lat/lng or place_id (at least one, for deduplication & map linking)

Critical pitfall: inaccurate geo location; local pack businesses cross-city/cross-region

4) Shopping / Ads: high-value e-commerce & ad verification

Suggested fields:

type (shopping/ad/organic distinguishable)

item fields: title, merchant, price, currency

landing fields: landing_page

position fields: position or block_position

Pitfalls: incomplete coverage, misaligned fields (price parsed into title, merchant missing, landing page truncated)

5) New/unstable modules (e.g., AI Overview): treat as bonus

Treat as “optional”; not main workflow dependency

PoC: track occurrence rate, missing field rate, repeated request differences

Minimum fields for tasks (scanning module → minimal field → purpose → pitfalls)

Module | Minimal fields (usable) | Typical use | Common pitfalls |

|---|---|---|---|

Organic | position, title, link, snippet | ranking/competitor monitoring | ranking calculation inconsistency |

PAA | questions, source_url | content selection, RAG question set | only questions returned, no expansion |

Local Pack | name, address, place_id/coordinates | local SEO, store info | geo drift/cross-city |

Shopping/Ads | merchant, price, landing_page, position | pricing info, ad verification | incomplete coverage, misaligned parsing |

Task-specific advice: frequency and evidence retention

SEO ranking & SERP feature monitoring

Minimal fields: TopN organic results (URL, position), target domain occurrence, PAA/Local/Shopping/Ads presence, module position/count, request parameters (geo/hl/gl/device) and timestamp

Frequency: daily 1× normally; high-competition/peak: every 4–6 hours

Evidence: retain HTML/screenshots of anomalous samples (at least sample), else impossible to explain if SERP changed or parser failed

E-commerce competitor pricing & entry info

Minimal fields: Shopping/Ads (merchant, price, landing_page, position) + organic competitor URLs

Frequency: high-frequency for key categories/brand keywords (hourly sampling), otherwise daily

Key: SERP layer alone insufficient; deep scrape landing/detail pages to supplement actual price, stock, shipping, SKU attributes

Ad verification / brand protection

Minimal fields: Ads entries (display domain, title, landing page, position) + screenshot (strongly recommended) + request params & timestamp

Frequency: daily to hourly depending on risk and budget; start with brand keywords + key regions/devices

Quality baseline: missing fields are worse than delay; define success by “usable result”

RAG / training search corpora

Minimal fields: SERP → source URL (organic/reference), title/snippet, request params & timestamp; retain HTML for sample backtracking if necessary

Frequency: batch collection; prioritize throughput and deduplication; high frequency not always required

Boundary: define usage, retention period, and PII handling upfront

Route selection: SERP API, residential proxies, or hybrid

Do not base selection on “stronger/cheaper” alone. True boundaries:

Do you need result fields or full pages?

Are you willing to pay the “long-term anti-bot & parser maintenance tax”?

Prefer SERP API: you want stable Organic + PAA/Local/Shopping/Ads fields and do not want to handle CAPTCHA, ban recovery, or structure changes yourself.

Prefer self-built residential proxies: you need non-SERP pages (landing/detail pages, internal, second-hop), strong custom parsing, or chain control.

Hybrid (common and stable): SERP layer via SERP API for keyword → structured SERP fields; deep scraping layer via residential proxies for landing/detail pages to supplement business fields.

Cost comparison: unify to “cost per successful result”

Do not look at API or proxy price alone. Define: total cost

per successfully obtained usable SERP result.

Monthly request estimate:

Monthly requests = keywords × daily frequency × pages × 30 × retry factor

Per successful request cost:

Cost per success = (API fee + proxy fee + engineering maintenance allocation) / successful results

Retry factor: use historical logs or PoC data for 403/429 rate, CAPTCHA trigger rate, average retry count.

Minimal runnable pipeline: keyword → alerting (supports two-layer division)

Minimal process:

Task input: keyword + geo/hl/gl + device + frequency + TopN + HTML/screenshot retention

Scheduling: generate tasks by frequency; design idempotent key (keyword+geo+device+date_hour)

Fetch: SERP layer via SERP API (mainly JSON, sample HTML/screenshots)

Standardized DB: unify ranking, module names, request params, timestamp

Alert/dashboard: three types suffice initially:

Target domain drops out of TopN / abnormal increase

Key module disappears/appears (PAA/Local/Shopping/Ads)

Ad anomalies (with screenshot/evidence link)

Hybrid layer handoff: URL queue

SERP layer outputs module fields + candidate URLs (organic, PAA source, shopping landing pages)

Deep scraping consumes by domain limit, dedup, priority fetch

Key: not “can it scrape?” but deduplication key, retry handling, avoid infinite cost burn

PoC acceptance: metrics, thresholds, and stop-loss

Do not decide based on documentation; use your own keywords/regions. PoC ensures “stable, reproducible, cost-effective” is measurable.

PoC sampling:

Keywords: 10–30 (cover local intent, shopping intent, brand, informational)

Regions: 2–3 (include core cities)

Device: desktop + mobile

Repeat requests: 2–3 per day (measure drift)

Duration: 3–7 days (cover workdays/weekend)

Metric A: effective result success rate (success defined by usable fields)

Successful sample: TopN organic usable + key module usable (PAA or Local/Shopping/Ads)

Threshold:

Conservative launch: ≥ 95%

High-frequency/Ad verification: ≥ 98%

Metric B: field completeness and misalignment per module

Do not mix all modules into one metric; evaluate 2–3 key modules:

Local: name/address/place_id (or coordinates) completeness

Shopping/Ads: merchant/price/landing_page/position completeness

PAA: at least questions, ideally source_url

Threshold start:

Core module field completeness: conservative ≥ 90%, ideal ≥ 95%

Misaligned values counted separately; more critical than missing fields

Metric C: geo accuracy and drift rate

Sample manual check: Local Pack matches target city

Drift rate (choose and fix method):

Top10 URL overlap

Module occurrence consistency (PAA/Local/Shopping/Ads)

Stop-loss:

Shopping/Ads critical module missing or misaligned long-term with geo/device correlation unexplained

Local Pack location clearly wrong (frequent cross-city/region)

Uncontrolled repeated drift of same-parameter requests

Self-built chain retries and maintenance make per-success cost unacceptable

Usage/logs/retention policies non-compliant

Conclusion: define route by “fields vs pages,” decide by PoC metrics

Two summary sentences:

If core deliverable is SERP fields (rankings + PAA/Local/Shopping/Ads) and you’ve been frustrated by 403/CAPTCHA/structure changes: use SERP API first to stabilize SERP layer, then optimize.

If core deliverable is page itself (landing/detail/second-hop/internal/logged-in/complex rendering) or you need strong custom parsing and chain control: residential proxies are better.

Stable and realistic: hybrid combination:

SERP layer: SERP API for “keyword → structured SERP fields” and monitoring/alerts

Deep scraping layer: residential proxies for landing/detail pages to supplement price, stock, content, and business fields

Only one thing to do: PoC with your own keywords/regions, measure effective result success rate, field completeness/misalignment, geo accuracy and drift, per-success cost. These numbers are more decisive than any document or reputation.

FAQ

What is a SERP API, and how is it different from self-built scraping?

A SERP API is a managed service that provides structured search result data, including modules such as Organic results, PAA, Local Pack, Shopping, and Ads. It handles proxy rotation, bans/CAPTCHA, and partial field maintenance, reducing the anti-bot, parsing, and maintenance costs of self-built scraping. Self-built scraping is more flexible, allowing you to scrape non-SERP pages or full HTML, but requires a team to maintain anti-bot strategies and parsing rules.

What key fields can a SERP API provide?

The main fields include:

Organic results: position, title, link, snippet

PAA (People Also Ask): question list, source URL

Local Pack: business name, address, coordinates/Place ID, phone, rating

Shopping / Ads: product name, merchant, price, currency, landing page, position

Fields can be selected based on business needs, and HTML or screenshots can be retained for auditing or secondary parsing.

How to conduct a PoC to ensure SERP API stability and reliability?

PoC recommendations:

Select 10–30 keywords covering core cities/countries

Simulate desktop and mobile devices

Repeat requests daily or hourly, measuring success rate, field completeness, and geo accuracy

Record HTML/screenshots as evidence

Key PoC metrics are success rate, field completeness, and geo accuracy to ensure reproducibility and cost control.