Residential Proxies for SERP Scraping and Rank Tracking (2026 Guide)

In this guide, we’ll cover why search visibility scraping gets blocked quickly, how residential IPs support local rank tracking, what setup details matter most, and when residential proxies make sense compared with other proxy types.

Search results are not the same everywhere. Rankings can shift by country, city, device, language, and request pattern. For marketers, SEO teams, and data operators, that makes accurate SERP collection harder than it looks.

This is where residential proxies for SERP scraping become useful. They help teams collect localized search data with lower block risk than many datacenter-based setups, especially when reporting depends on geographic accuracy.

Why SERP and search visibility scraping gets blocked quickly

Search engines protect their results pages aggressively. Even moderate-volume collection can trigger rate limits, CAPTCHAs, or incomplete responses if the traffic pattern looks automated.

Common reasons SERP scraping gets blocked include:

Too many requests from the same IP

Unnatural request timing or bursty traffic

Mismatch between IP location and search parameters

Repetitive browser headers or user agents

Missing cookies, session behavior, or normal browsing signals

For rank tracking and search visibility monitoring, these issues create two problems:

Access instability: requests fail, stall, or return challenge pages

Data quality issues: rankings appear inaccurate because the results are not truly localized or consistent

This matters for teams monitoring:

Local SEO performance across cities or regions

Competitor visibility by market

Paid and organic search overlap

Marketplace and ecommerce search presence

Brand presence in location-sensitive queries

If the collection layer is weak, the reporting layer becomes unreliable.

How residential proxies support local rank tracking and SERP data collection

Residential proxies route requests through IPs associated with real residential networks. In practice, that can make them more suitable for search monitoring workflows where search engines are sensitive to obvious automation patterns.

For SEO monitoring, the main value of residential proxies is not “magic access.” It is closer alignment with how localized requests look when they come from normal user environments.

Why they are useful for localized SERP collection

Residential proxies can help with:

Geographic targeting: collect results closer to the location you actually want to monitor

Lower detection risk: compared with setups that repeatedly hit search engines from easy-to-flag infrastructure

Rotation support: distribute requests across IPs instead of overloading one address

Session flexibility: maintain a session when needed, or rotate when the workflow requires it

For example, if a growth marketing team wants to compare rankings for “running shoes” in London, Manchester, and Birmingham, location-specific IPs can help produce more realistic local results than a single fixed IP from another region.

That makes geo-targeted SERP scraping more useful for:

Local SEO reporting

Franchise and multi-location brand monitoring

International SEO tracking

Competitor comparison by region

Search visibility analysis for ecommerce catalogs

What matters most: city targeting, rotation, request pacing, headers

Using SERP scraping proxies effectively is less about having proxies in general and more about configuring the workflow correctly.

City and regional targeting

If you track rankings for local search terms, country-level targeting is often not enough. Results can vary significantly by city.

Prioritize proxy infrastructure that supports:

Country targeting

State or province targeting when relevant

City-level targeting for local SEO use cases

Consistent mapping between target market and request origin

If the location is wrong, your ranking data may be technically collected but strategically useless.

IP rotation strategy

Rotation should match the task.

A practical rule:

Use rotating IPs for broader keyword collection across many queries

Use sticky sessions when you need short-term session continuity for a sequence of related requests

Over-rotating can create unnatural behavior. Under-rotating can overload one IP and trigger blocks. The right balance depends on query volume, concurrency, and how your scraper handles retries.
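The rotating-versus-sticky choice can be sketched in a few lines. Many providers encode targeting and session options in the proxy username, but the exact syntax varies, so the format below (`country-`, `city-`, `session-`) and the names `ProxyTarget` and `build_proxy_username` are hypothetical placeholders rather than any specific provider's API.

```python
import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProxyTarget:
    country: str                 # e.g. "gb"
    city: Optional[str] = None   # e.g. "london"


def build_proxy_username(base_user: str, target: ProxyTarget,
                         session_id: Optional[str] = None) -> str:
    """Build a gateway username in a HYPOTHETICAL format.

    Check your provider's documentation for the real syntax.
    """
    parts = [base_user, f"country-{target.country}"]
    if target.city:
        parts.append(f"city-{target.city}")
    if session_id:
        # Reusing one session ID asks the gateway to keep the same exit IP.
        parts.append(f"session-{session_id}")
    return "-".join(parts)


def new_session_id() -> str:
    """Short random ID used to pin a sticky session."""
    return uuid.uuid4().hex[:8]


london = ProxyTarget(country="gb", city="london")

# Rotating: no session ID, so each request may exit from a different IP.
rotating_user = build_proxy_username("user123", london)

# Sticky: reuse one session ID for a short sequence of related requests.
sticky_id = new_session_id()
sticky_user = build_proxy_username("user123", london, session_id=sticky_id)
```

In this sketch, a broad keyword sweep would use `rotating_user`, while a paginated check of one query would reuse `sticky_user` until the sequence finishes.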

Request pacing

One of the fastest ways to break a rank tracking setup is to send too many requests too quickly.

Good pacing usually means:

Limiting concurrent requests per target domain

Adding randomized delays

Scheduling collection windows instead of constant bursts

Slowing down when challenge rates increase

In practice, teams often set different concurrency limits by market. For example, they may run a lower request rate for a smaller city-level campaign and a slightly higher one for country-level checks spread across more IPs. That helps keep localized collection stable instead of treating every location the same.
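As a rough illustration of randomized delays that stretch when challenges increase, here is a minimal sketch. The function name `next_delay`, the slowdown multiplier, and the default values are assumptions chosen for the example, not recommended settings.

```python
import random


def next_delay(base_delay: float, challenge_rate: float,
               jitter: float = 0.5, max_delay: float = 60.0) -> float:
    """Return seconds to wait before the next request to one market.

    base_delay:     normal spacing between requests, in seconds
    challenge_rate: share of recent responses that were challenges (0.0 to 1.0)
    jitter:         random spread around the delay (0.5 means +/-50%)
    """
    # Slow down as challenges rise: 0% keeps the base delay, 50% makes it 6x.
    slowdown = 1.0 + 10.0 * challenge_rate
    delay = base_delay * slowdown
    # Randomize so requests do not arrive on a fixed beat.
    delay *= random.uniform(1.0 - jitter, 1.0 + jitter)
    return min(delay, max_delay)
```

A collector would typically call something like `time.sleep(next_delay(2.0, challenge_rate))` between requests, keeping a separate `challenge_rate` for each market so that one noisy city does not slow down the rest.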

Headers and browser realism

Proxy quality alone will not solve fingerprinting issues. Your scraper also needs realistic request behavior.

Review:

User-agent rotation

Accept-Language alignment with the target market

Header consistency

Cookie handling

Device and browser emulation where needed

If you send a request from a Paris IP with headers suggesting a US-English environment, the results may be inconsistent and the request easier to flag.
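One way to keep headers aligned with the target market is to build them from a small lookup table. This is a sketch only: the market codes, the `build_headers` helper, and the user-agent strings are illustrative values, and real user agents go stale and need periodic refreshing.

```python
# Market code -> Accept-Language value (illustrative, extend as needed).
ACCEPT_LANGUAGE = {
    "fr": "fr-FR,fr;q=0.9,en;q=0.5",
    "gb": "en-GB,en;q=0.9",
    "us": "en-US,en;q=0.9",
}

# Example user-agent strings; refresh these as browser versions change.
USER_AGENTS = {
    "desktop": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36"
    ),
    "mobile": (
        "Mozilla/5.0 (Linux; Android 10; K) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/124.0.0.0 Mobile Safari/537.36"
    ),
}


def build_headers(market: str, device: str = "desktop") -> dict:
    """Return request headers whose language matches the proxy's market."""
    # Fail loudly instead of silently sending mismatched headers.
    if market not in ACCEPT_LANGUAGE:
        raise ValueError(f"No language profile for market {market!r}")
    if device not in USER_AGENTS:
        raise ValueError(f"Unknown device type {device!r}")
    return {
        "User-Agent": USER_AGENTS[device],
        "Accept-Language": ACCEPT_LANGUAGE[market],
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    }


paris_headers = build_headers("fr", "mobile")
```

Raising an error for an unknown market is a deliberate choice here: a missing profile is easier to fix than a week of rankings collected with the wrong language context.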

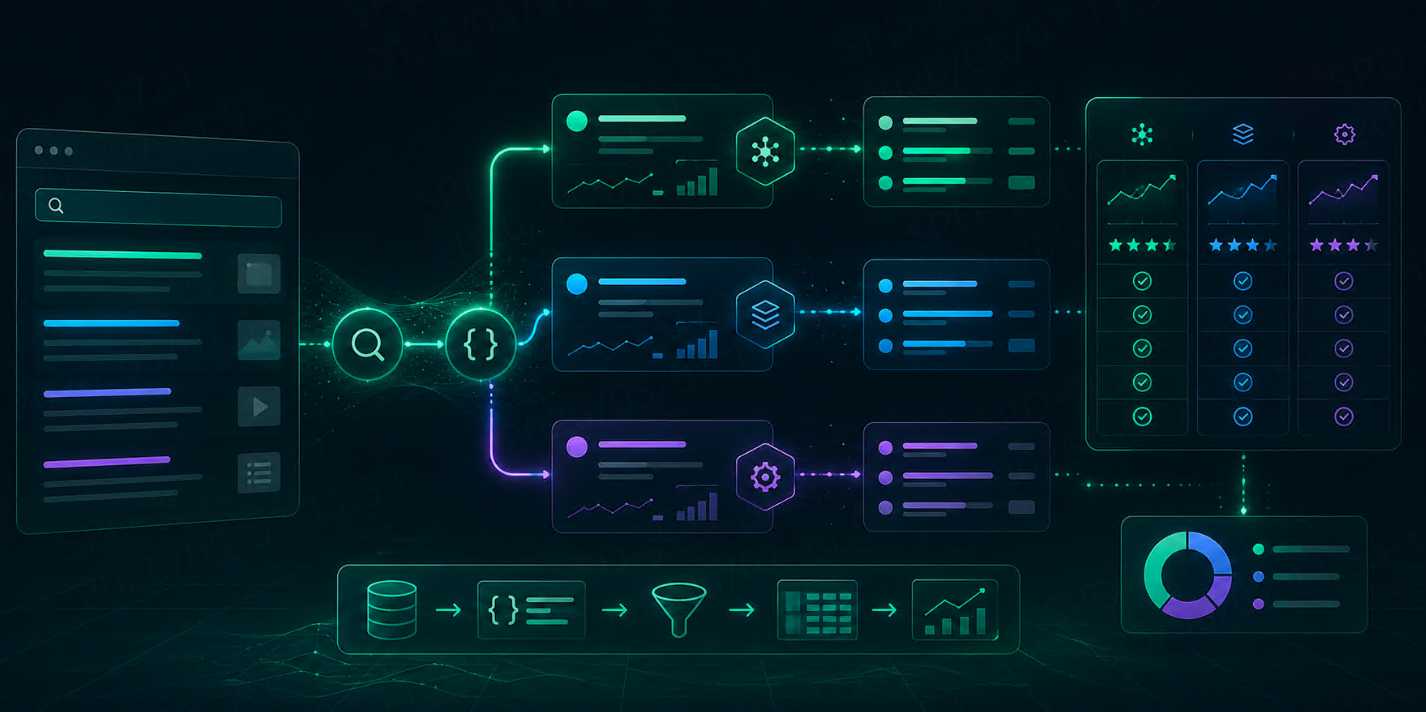

A basic SERP scraping workflow for marketers and data teams

Here is a simple workflow for teams using local rank tracking proxies or building internal search monitoring pipelines.

Step 1: Define the tracking goal

Be specific about what you need to measure:

Organic rankings by keyword

Local pack visibility

Competitor presence

Featured snippets or SERP feature capture

Paid search ad monitoring alongside organic results

This determines the request frequency, geography, and output structure.

Step 2: Segment keywords by location and intent

Group keywords by:

Country

City

Device type

Brand vs non-brand intent

Product category or campaign cluster

This reduces reporting noise and makes proxy allocation more efficient.

It is also worth separating mobile and desktop rank collection when those views matter to the business. A local restaurant chain, for example, may care more about mobile visibility in map-heavy searches, while an ecommerce team may want desktop and mobile tracked separately for product queries.
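The grouping step might look like the sketch below. The field names and sample keywords are made up for illustration; the point is only that each segment gets its own key, so mobile and desktop are never mixed in one batch.

```python
from collections import defaultdict

# Sample rows; in practice these would come from a keyword list or database.
keywords = [
    {"keyword": "best coffee shop", "country": "gb", "city": "london",
     "device": "mobile", "brand": False},
    {"keyword": "coffee near me", "country": "gb", "city": "london",
     "device": "mobile", "brand": False},
    {"keyword": "buy wireless earbuds", "country": "gb", "city": "manchester",
     "device": "desktop", "brand": False},
    {"keyword": "nike running shoes sale", "country": "gb", "city": "london",
     "device": "desktop", "brand": True},
]


def segment(rows):
    """Group keywords by (country, city, device, brand intent)."""
    groups = defaultdict(list)
    for row in rows:
        key = (row["country"], row["city"], row["device"], row["brand"])
        groups[key].append(row["keyword"])
    return dict(groups)


segments = segment(keywords)
```

Each key in `segments` can then be scheduled, paced, and reported as its own unit.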

Step 3: Map each query set to the right proxy location

For accurate SERP scraping workflows built on residential proxies, your keyword-location map should be explicit.

Example:

“best coffee shop” → city-level proxy targeting

“buy wireless earbuds” → country or metro-level targeting depending on campaign scope

“nike running shoes sale” → market-specific proxy targeting for ecommerce comparison

A simple spreadsheet or rules table can help here: keyword cluster, target city, device type, language, and assigned proxy pool. That makes troubleshooting much easier when one market starts returning unusual results.
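A rules table like this can be kept as a CSV file and loaded with a few checks. The sketch below assumes the five columns named above; the cluster names and pool names are hypothetical.

```python
import csv
import io

REQUIRED = ("cluster", "target_city", "device", "language", "proxy_pool")

# Inline CSV for the example; normally this would be a file or sheet export.
RULES_CSV = """\
cluster,target_city,device,language,proxy_pool
coffee-shops,London,mobile,en-GB,pool-gb-london
running-shoes,London,desktop,en-GB,pool-gb-london
running-shoes,Manchester,desktop,en-GB,pool-gb-manchester
"""


def load_rules(text: str) -> dict:
    """Parse the rules table, rejecting blank fields and duplicate rules."""
    rules = {}
    # Data starts on line 2 because line 1 is the header row.
    for line_no, row in enumerate(csv.DictReader(io.StringIO(text)), start=2):
        missing = [col for col in REQUIRED if not (row.get(col) or "").strip()]
        if missing:
            raise ValueError(f"line {line_no}: missing {missing}")
        key = (row["cluster"], row["target_city"], row["device"])
        if key in rules:
            raise ValueError(f"line {line_no}: duplicate rule for {key}")
        rules[key] = row
    return rules


rules = load_rules(RULES_CSV)
pool = rules[("running-shoes", "Manchester", "desktop")]["proxy_pool"]
```

When one market starts returning unusual results, the table gives a single place to confirm which pool, language, and device profile that market was supposed to use.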

Step 4: Control concurrency and retries

Build guardrails into your collection logic:

Max requests per minute per location

Timeout thresholds

Retry limits

CAPTCHA detection handling

Fallback logic when a query fails

For example, if challenges increase in one city, you might temporarily reduce concurrency there from 5 parallel requests to 2, while keeping normal rates in other markets. That kind of location-specific control is often more effective than slowing down the entire pipeline.
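The location-specific control described above can be sketched as a small per-city tracker plus a bounded retry loop. The class name, window size, and 20% threshold are assumptions for the example, and `fetch` stands in for whatever function your scraper uses to request a page.

```python
from collections import deque


class LocationThrottle:
    """Track recent outcomes for one location and suggest a concurrency level."""

    def __init__(self, normal=5, reduced=2, window=20, threshold=0.2):
        self.normal = normal
        self.reduced = reduced
        self.threshold = threshold
        self.outcomes = deque(maxlen=window)  # True means "challenged"

    def record(self, challenged: bool) -> None:
        self.outcomes.append(challenged)

    def challenge_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def allowed_concurrency(self) -> int:
        # Drop to the reduced level only while this location is struggling.
        if self.challenge_rate() > self.threshold:
            return self.reduced
        return self.normal


def fetch_with_retries(fetch, throttle, max_attempts=3):
    """Call fetch() up to max_attempts times; return the page or None.

    fetch is a caller-supplied function returning (html, challenged).
    """
    for _ in range(max_attempts):
        html, challenged = fetch()
        throttle.record(challenged)
        if not challenged:
            return html
    return None  # caller applies fallback logic, e.g. re-queue for later


# One throttle per market, so a problem in one city does not slow the others.
throttles = {"london": LocationThrottle(), "manchester": LocationThrottle()}
```

A worker pool would read `throttles[city].allowed_concurrency()` before dispatching each batch, which keeps the slowdown local to the affected market.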

Step 5: Normalize and validate the results

Before sending data to dashboards, validate:

Whether the response is a real SERP page

Whether the location matches the requested market

Whether ranking positions are complete

Whether SERP features were parsed correctly

Whether failed or challenged requests were excluded

Teams often miss the last point. A challenge page may still return HTML, but it is not a valid ranking result. A useful check is to flag pages with CAPTCHA markers, unusually short HTML, or missing core result elements before they enter reporting.
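The three checks mentioned above (CAPTCHA markers, unusually short HTML, missing result elements) can be combined into one gate before reporting. This is a simplified sketch: the marker strings, the length threshold, and the `class="result"` pattern are assumptions that would need tuning against the real pages your pipeline collects.

```python
import re

# Assumed marker strings; extend with what your challenge pages contain.
CAPTCHA_MARKERS = ("captcha", "unusual traffic", "verify you are human")

# Assumed minimum size for a real results page; tune against real samples.
MIN_HTML_LENGTH = 5000

# Hypothetical pattern for one result container; real markup will differ.
RESULT_PATTERN = re.compile(r'<div[^>]+class="[^"]*\bresult\b', re.IGNORECASE)


def validate_serp(html: str, min_results: int = 3) -> list:
    """Return a list of problems; an empty list means the page looks usable."""
    problems = []
    lowered = html.lower()
    if any(marker in lowered for marker in CAPTCHA_MARKERS):
        problems.append("captcha_marker")
    if len(html) < MIN_HTML_LENGTH:
        problems.append("html_too_short")
    if len(RESULT_PATTERN.findall(html)) < min_results:
        problems.append("missing_results")
    return problems
```

Pages that return any problem should be logged and excluded rather than parsed, so that a blocked request shows up as a gap in the data instead of a false ranking change.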

For data teams, validation is often the difference between usable SEO intelligence and misleading rank reports.

Common causes of inaccurate rank tracking data

Many teams assume rank tracking problems come from the parsing layer alone. In reality, collection design is often the bigger issue.

Common causes include:

Wrong proxy geography

If the IP location does not match the market being monitored, your rankings may reflect the wrong audience environment.

Too much request volume from too few IPs

This can trigger challenge pages, partial results, or inconsistent page loads.

Ignoring language and device context

Search results differ based on language settings and device type. Desktop-only assumptions can distort reporting.

No validation for blocked pages

Some scrapers treat challenge pages as valid responses. That leads to broken rank extraction and silent reporting errors.

Inconsistent scheduling

Comparing rankings collected at very different times, under different request patterns, can create false trend signals.

For teams using a residential setup for SEO monitoring, the best results usually come from combining good proxy infrastructure with careful request design and QA checks.

When to use residential proxies vs other proxy types

Residential proxies are not the only option for SERP collection. The right choice depends on sensitivity, scale, and budget.

Residential proxies

Best fit when you need:

Localized SERP accuracy

Lower detection risk in sensitive scraping environments

Flexible geographic targeting

More realistic user-origin simulation for search monitoring

Use cases:

Local rank tracking

Multi-region SEO monitoring

Competitor visibility analysis

Ecommerce search monitoring across markets

Datacenter proxies

Best fit when you need:

Lower-cost infrastructure

Simpler high-volume tasks

Less sensitive targets

Limitations for SERP collection:

May be easier for search platforms to identify

Often weaker for location-sensitive ranking workflows

Can produce higher block rates depending on setup

ISP proxies

Can be useful when you want:

More stable sessions

A middle ground between datacenter and residential behavior

But for highly localized and rotation-heavy search monitoring tasks, residential options are often the more flexible choice.

In short:

Use residential proxies when data accuracy depends on location realism and lower block risk

Use datacenter proxies when cost matters more and the target is less sensitive

Use ISP proxies when session stability is the main concern

How TalorData can help with location-sensitive search monitoring

TalorData provides overseas residential proxies for teams that need location-sensitive search data collection.

For this use case, that is most relevant when you need:

City-level or market-level IP targeting for localized rank checks

Rotating residential IPs for broader keyword collection across many locations

Support for search visibility monitoring where geographic accuracy matters

Infrastructure that fits ongoing SERP data collection for marketing or analyst workflows

That can be useful for:

Growth marketers tracking campaign visibility across cities

Data teams building localized SERP collection pipelines

Ecommerce analysts comparing search presence by market

Scraping operators supporting search monitoring at scale

The proxy layer still needs disciplined execution: realistic headers, controlled request rates, validation checks, and clear location mapping. But when localized monitoring is the goal, residential IPs are often a better fit than generic proxy infrastructure.

FAQ

Are residential proxies necessary for SERP scraping?

Not always. For small or low-sensitivity tasks, other proxy types may work. But residential proxies are often more suitable when you need localized results, lower block risk, and better alignment with real-user network conditions.

What is the difference between SERP scraping proxies and local rank tracking proxies?

The terms overlap. “SERP scraping proxies” is the broader term, covering any proxy setup used to collect search results, while “local rank tracking proxies” usually refers to setups optimized for location-specific ranking checks.

Can residential proxies guarantee accurate search rankings?

No. They improve the collection environment, but accuracy also depends on location mapping, language settings, device emulation, request pacing, and validation of the returned pages.

How often should I rotate proxies for SERP scraping?

It depends on your query volume and workflow. High-frequency tasks may need more rotation, while short sequential checks may benefit from temporary sticky sessions. The goal is to avoid both overloading a single IP and creating unnatural request behavior.

Conclusion

Using residential proxies for SERP scraping can make rank tracking and search visibility monitoring more dependable, especially when results vary by location. But proxies alone are not enough. Reliable SERP data also depends on pacing, headers, session logic, device handling, and validation.

If your team needs localized monitoring for SEO, ecommerce, or competitive research, it makes sense to assess whether a residential proxy setup matches your target markets and collection volume.